TL;DR:

- AI agents operate on endpoints, where they bypass traditional security controls designed for human users and create blind spots.
- Endpoint security closes those gaps by monitoring agent behavior, enforcing identity scopes, and enabling rapid containment of malicious activity.
- Comprehensive telemetry, behavioral controls, and data loss prevention support trustworthy AI deployment and regulatory compliance.

AI agents are already executing multi-step workflows on developer workstations, CI/CD runners, and corporate laptops across your organization, so endpoint security for AI agents is no longer optional. The problem is that most enterprise security stacks were built for human users, not autonomous agents. Network firewalls, cloud access security brokers (CASBs), and browser-based controls monitor traffic flows and SaaS sessions. They don’t watch what an AI agent does when it reads a local file, generates a script, and calls an API, all without a human in the loop. That blind spot is where the real risk lives.

| Point | Details |
|---|---|
| Endpoints are AI agent runtimes | AI agents run workflows locally where legacy network controls lack visibility, making endpoint security essential. |
| Reduce blast radius | Endpoint detection and response tools help contain damage by monitoring hosts you control and isolating threats rapidly. |
| Agent identity matters | Assigning scoped identities to AI agents and enforcing access at endpoints prevents privilege abuse and improves accountability. |
| Behavioral monitoring is critical | Dynamic action-sequence monitoring on endpoints detects complex AI agent behaviors that static posture checks miss. |
| Endpoint-native DLP protects data | Data loss prevention at the device level is vital as AI agents create and share sensitive data locally, bypassing perimeter protections. |

AI agents don’t live only in the cloud. Many of them execute on endpoints, and that distinction changes how you need to protect them.

Traditional perimeter security assumes that sensitive activity passes through a chokepoint you control, typically a firewall or a proxy. AI agents break that assumption. They run locally, invoke operating system calls, read clipboard contents, write to local memory, and interact with local APIs. As endpoint AI agents increasingly operate outside traditional perimeter controls, legacy tools simply have no visibility into this local execution activity.

The coverage gap is specific and serious. Consider what an AI agent connected to your enterprise’s business process workflows can access locally:

This is not a theoretical gap. Enterprise deployments routinely place AI agents on the same machines where engineers have elevated privileges, access to production secrets, and connections to internal services. Without endpoint security controls watching that execution layer, you’re flying blind.

When an AI agent is compromised, the question shifts from “how did it happen” to “how much damage can it do before you stop it.” Endpoint security is the primary lever for keeping that answer small.

EDR visibility is significantly weaker on hosts you don’t directly control, which is why ensuring endpoint agent coverage on every controlled host is foundational. Here’s what that looks like in practice:

The blast radius concept matters here. An AI agent with broad file and network permissions, running on an unmonitored endpoint, can exfiltrate data, pivot to adjacent systems, or escalate privileges before any cloud-layer alert fires. Endpoint coverage closes that window.

Pro Tip: Map every host running an AI agent workflow and verify EDR coverage before you expand agent deployments. Gaps in coverage almost always align with high-privilege machines, and that’s exactly where attackers will focus.
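The mapping exercise in the tip above can be sketched as a simple inventory comparison. This is a minimal illustration, not a feature of any particular EDR product: the `Host` fields, the host names, and the `edr_enrolled` set are all hypothetical stand-ins for whatever your asset inventory and EDR console export.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Host:
    name: str
    runs_agent: bool       # hypothetical inventory flag: an AI agent workflow runs here
    high_privilege: bool   # hypothetical flag: host holds elevated access or secrets


def coverage_gaps(hosts, edr_enrolled):
    """Return agent hosts with no EDR sensor, high-privilege hosts first."""
    gaps = [h for h in hosts if h.runs_agent and h.name not in edr_enrolled]
    # False sorts before True, so high-privilege hosts lead, then by name.
    return sorted(gaps, key=lambda h: (not h.high_privilege, h.name))


# Illustrative inventory; in practice both inputs come from your own tooling.
hosts = [
    Host("ci-runner-01", runs_agent=True, high_privilege=True),
    Host("dev-laptop-17", runs_agent=True, high_privilege=False),
    Host("kiosk-03", runs_agent=False, high_privilege=False),
    Host("build-box-02", runs_agent=True, high_privilege=True),
]
edr_enrolled = {"dev-laptop-17", "kiosk-03", "build-box-02"}

for host in coverage_gaps(hosts, edr_enrolled):
    print(f"{host.name}: no EDR coverage (high privilege: {host.high_privilege})")
```

Sorting high-privilege hosts to the top reflects the point above: those are the gaps to close first.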

Every AI agent is effectively an identity in your environment, and most organizations aren’t treating it that way yet.

“Every AI agent is an identity requiring scoped access; without proper controls, they become high-risk identity classes with broad credentials.”

The problem with AI agent identities is entitlement creep. Agents start with a defined set of permissions, but as workflows expand and integrations are added, those permissions accumulate. Six months into deployment, an agent that started with read access to one data store often holds credentials to a dozen systems. Without enforcement at the endpoint, identity governance policies that look clean on paper don’t reflect what agents can actually do locally.

Effective endpoint-enforced identity accountability requires:

Treating AI agents as a vague “service account” category is the mistake that leads to breaches. They are autonomous actors, and they need the accountability infrastructure to match.
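One way to make entitlement creep visible is to compare the scopes an agent was approved for against the scopes it actually holds, and to deny anything outside the approved set by default. The sketch below assumes scopes can be represented as plain strings; the scope names and both helper functions are hypothetical and not taken from any specific identity product.

```python
def entitlement_drift(baseline, current):
    """Compare an agent's approved scopes with the scopes it holds now."""
    return {
        "added": sorted(current - baseline),    # accumulated beyond approval
        "removed": sorted(baseline - current),  # approved but no longer held
    }


def is_allowed(approved_scopes, requested_scope):
    """Deny by default: only explicitly approved scopes pass."""
    return requested_scope in approved_scopes


# Illustrative data: an agent approved for read access to one data store.
approved = {"crm:read"}
held_now = {"crm:read", "crm:write", "sharepoint:read", "slack:post"}

drift = entitlement_drift(approved, held_now)
print("Scopes gained since approval:", drift["added"])
print("crm:write allowed at the endpoint?", is_allowed(approved, "crm:write"))
```

Running the drift check on a schedule, and enforcing `is_allowed` locally, is what keeps the policy on paper aligned with what the agent can actually do.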

Static posture checks tell you that an endpoint was compliant at a point in time. They don’t tell you what an AI agent did between check-ins. That’s the gap behavioral monitoring fills.

AI agent behavior is fundamentally nondeterministic. The same agent given the same prompt in two different contexts may take different action sequences. That variability makes signature-based detection nearly useless. Behavioral monitoring of AI agents, specifically watching action sequences and tool invocations over time, is the only reliable way to detect patterns that deviate from normal.

Here’s a practical implementation sequence:

Pro Tip: Start collecting endpoint telemetry before you deploy AI agents at scale. You need a baseline of “normal” to detect “abnormal,” and you can’t build that baseline retroactively.
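To make the idea of a baseline concrete, here is a minimal sketch of sequence-aware monitoring. It records which action-to-action transitions appear in known-good sessions, then lists transitions in a new session that were never seen before. The action labels are hypothetical, and real telemetry would need far richer features; because agent behavior varies between runs, an unseen transition is a signal to review, not proof of compromise.

```python
from collections import Counter


def transitions(actions):
    """Turn an ordered action list into consecutive (previous, next) pairs."""
    return list(zip(actions, actions[1:]))


def build_baseline(sessions):
    """Count every transition observed across known-good sessions."""
    counts = Counter()
    for session in sessions:
        counts.update(transitions(session))
    return counts


def unseen_transitions(baseline, session):
    """Return transitions in this session that never appear in the baseline."""
    # Counter returns 0 for missing keys, so no KeyError handling is needed.
    return [t for t in transitions(session) if baseline[t] == 0]


# Illustrative sessions; the action names are assumptions for this example.
baseline = build_baseline([
    ["read_file", "call_llm", "write_file"],
    ["read_file", "call_llm", "http_post:internal"],
])
suspect = ["read_file", "call_llm", "spawn_shell", "http_post:external"]

for prev_action, next_action in unseen_transitions(baseline, suspect):
    print(f"unseen transition: {prev_action} -> {next_action}")
```

This also shows why the baseline has to exist first: with an empty baseline, every transition looks new and the signal is useless.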

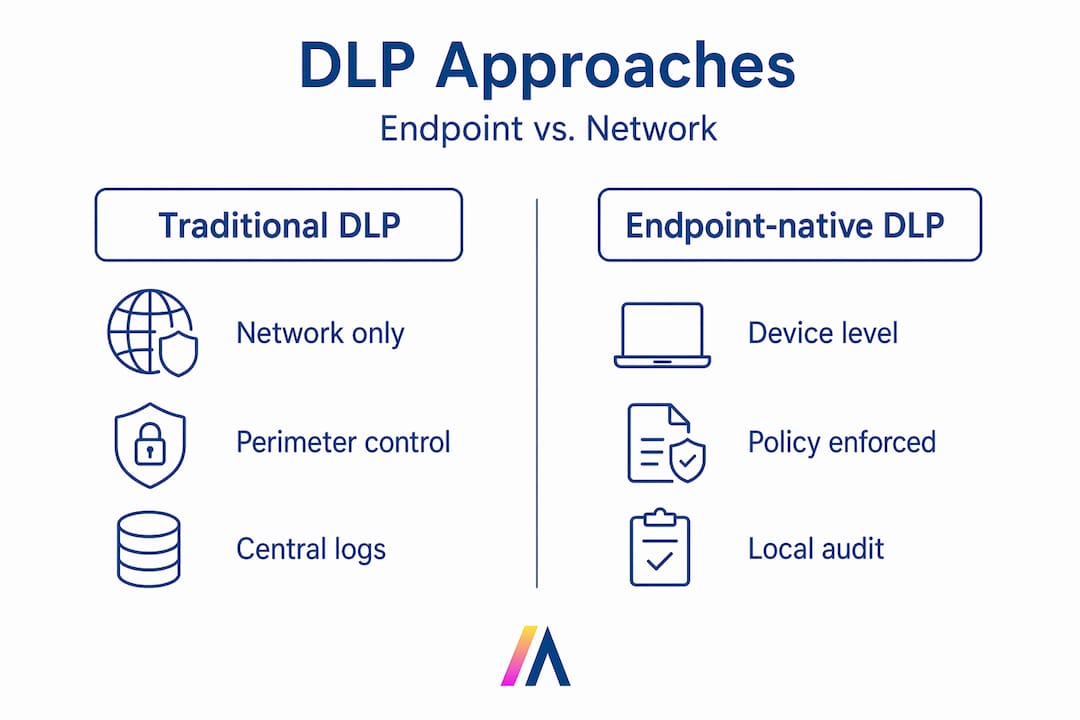

Traditional DLP inspects data as it crosses the network perimeter. AI agents routinely handle sensitive data entirely within the endpoint, transforming it, staging it, and sometimes exfiltrating it without that traffic ever looking suspicious at the network layer.

Endpoint DLP analyzes and classifies data locally on device, enforcing policy before data leaves the machine. That’s the critical difference for AI-specific workflows.

| Capability | Traditional perimeter DLP | Endpoint-native DLP |
|---|---|---|
| Where it inspects | Network traffic only | Inside the device, at the file and process level |
| AI agent coverage | Misses local file operations | Captures reads, writes, and transfers by agent processes |
| Policy enforcement timing | After data leaves the host | Before data leaves the host |
| Off-network protection | None | Full enforcement regardless of network connection |
| Context awareness | IP/port/protocol | Process identity, data classification, agent behavior |
| Response capability | Block or alert on traffic | Block operation, quarantine file, or terminate process |

For enterprises running AI agents that interact with compliance-sensitive data, this distinction is not academic. An agent that drafts a document containing customer PII and then uploads it to an unauthorized destination creates a breach. Endpoint DLP is your last line of defense at that decision point.
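The decision point described above can be sketched as a local classify-then-decide step that runs before any upload happens. This is a deliberately simplified illustration: the two regular expressions, the destination allowlist, and the function names are assumptions for the example, and production DLP engines use much more robust classification than pattern matching.

```python
import re

# Hypothetical, simplified detectors for two kinds of personal data.
PATTERNS = {
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

# Hypothetical allowlist of destinations approved for sensitive content.
ALLOWED_DESTINATIONS = {"sharepoint.example.internal"}


def classify(text):
    """Return the set of sensitive-data labels found in the text."""
    return {label for label, pattern in PATTERNS.items() if pattern.search(text)}


def decide(text, destination):
    """Decide locally, before transfer, whether the upload may proceed."""
    labels = classify(text)
    if labels and destination not in ALLOWED_DESTINATIONS:
        return "block", labels
    return "allow", labels


draft = "Contact jane@example.com, SSN 123-45-6789"
print(decide(draft, "paste.example.net"))
print(decide(draft, "sharepoint.example.internal"))
```

The important property is ordering: classification and the policy decision both happen on the device, so the block lands before the data leaves the host.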

AI agent frameworks introduce a class of vulnerability that security teams are only beginning to grapple with: prompt injection leading to remote code execution (RCE). When a malicious actor embeds instructions in data that an agent processes, the agent can be directed to execute arbitrary code on the endpoint.

RCE vulnerabilities in AI agent frameworks can enable unauthorized actions that endpoint hardening and monitoring directly address. The defense posture requires multiple controls working together:

The key insight here: you don’t need to understand the AI-specific exploit to catch its consequences. Post-exploitation behavior looks the same whether it was triggered by a human attacker or a manipulated AI agent.
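As an illustration of catching consequences rather than exploits, the sketch below walks a process table and flags shell-like processes that descend from an agent runtime, however many levels down. The process names, the table layout, and the name lists are hypothetical; real EDR products expose this ancestry through their own telemetry, and some agents spawn shells legitimately, so a hit here is a prompt for review rather than a verdict.

```python
# Hypothetical process names; adjust to the agent runtimes you actually run.
AGENT_PROCESSES = {"agent-runtime"}
SUSPICIOUS_NAMES = {"sh", "bash", "cmd.exe", "powershell.exe"}


def has_agent_ancestor(pid, procs):
    """Walk the parent chain and report whether any ancestor is an agent."""
    seen = set()
    current = procs[pid]["ppid"]
    while current in procs and current not in seen:
        if procs[current]["name"] in AGENT_PROCESSES:
            return True
        seen.add(current)  # guards against cycles in malformed data
        current = procs[current]["ppid"]
    return False


def flag_processes(procs):
    """Return PIDs of shell-like processes that descend from an agent."""
    return sorted(
        pid
        for pid, proc in procs.items()
        if proc["name"] in SUSPICIOUS_NAMES and has_agent_ancestor(pid, procs)
    )


# Illustrative process table keyed by PID.
procs = {
    100: {"ppid": 1, "name": "agent-runtime"},
    101: {"ppid": 100, "name": "python"},
    102: {"ppid": 101, "name": "bash"},  # shell two levels below the agent
    200: {"ppid": 1, "name": "bash"},    # ordinary user shell
}

print(flag_processes(procs))
```

The rule knows nothing about prompt injection. It only observes that a shell appeared underneath an agent, which is the same evidence a human-driven intrusion would leave.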

Regulatory and operational accountability for AI agents depends on one thing: reliable, detailed records of what they did, when, and why. Endpoint telemetry is the mechanism that makes that possible.

Emerging NIST standards require structured, machine-readable audit trails of agent behavior that endpoint logging can provide directly. Organizations that rely solely on application-layer logs will find those records incomplete when an incident or audit demands the full picture.

| Telemetry type | Data captured | Compliance value |
|---|---|---|
| Process telemetry | Agent process start, stop, parent-child relationships | Maps agent actions to specific workflow invocations |
| File system telemetry | File reads, writes, renames, deletions by agent process | Supports data handling accountability requirements |
| Network telemetry | Outbound connections, destination IPs, data volume | Enables detection of unauthorized data transfers |
| Authentication telemetry | Token use, API calls, identity assertions | Verifies agent acted within authorized scope |
| Script execution logs | Code written and executed by agent | Critical for detecting and investigating RCE exploits |

Integrating this telemetry with your zero-trust audit infrastructure creates the forensic trail that compliance teams and incident responders need. Start building it now, before regulators or auditors ask for it.
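A structured, machine-readable record can be as simple as one JSON object per event, tied to an agent identity. The sketch below is only an illustration: the field names and the `AgentAuditEvent` type are assumptions made for this example and do not follow any published NIST schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class AgentAuditEvent:
    agent_id: str        # hypothetical field: the scoped identity of the agent
    telemetry_type: str  # e.g. "process", "file", "network", "auth", "script"
    action: str
    target: str
    timestamp: str       # ISO 8601, UTC


def make_event(agent_id, telemetry_type, action, target, now=None):
    """Build an audit event, stamping it with the current UTC time by default."""
    now = now or datetime.now(timezone.utc)
    return AgentAuditEvent(agent_id, telemetry_type, action, target, now.isoformat())


def to_json_line(event):
    """Serialize one event as a single JSON line with stable key order."""
    return json.dumps(asdict(event), sort_keys=True)


event = make_event("agent-invoice-bot", "file", "read", "/data/invoices/q3.csv")
print(to_json_line(event))
```

Emitting one JSON line per event keeps the records easy to ship to a log pipeline and to query by agent identity during an audit or incident.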

Here’s the uncomfortable truth that most endpoint security vendors won’t tell you directly: the security industry is about to repeat the same mistake it made in the early cloud era.

When enterprises first moved workloads to the cloud, the instinct was to retrofit existing tools, applying network security paradigms to an environment that didn’t work that way. The result was years of blind spots, breaches, and catch-up investment. Today, most enterprises incorrectly treat AI agents like traditional applications instead of autonomous identities requiring comprehensive guardrails enforced from the endpoint.

Reactive detection is not enough. If your endpoint strategy for AI agents is “we’ll detect and respond when something bad happens,” you’ve already accepted a breach. The right posture is to enforce what agents can do before you monitor what they are doing. Guardrails first, telemetry second, response third.

Monitoring must move beyond static posture checks to real-time, sequence-aware detection built on endpoint telemetry. That’s a higher bar than most security teams have set for traditional workloads, and it needs to be, because AI agents act faster and with less human review than any previous class of software.

The organizations that will deploy AI agents with confidence aren’t the ones that built the biggest incident response team. They’re the ones that built proactive endpoint enforcement into their AI architecture from day one, combining identity controls, behavioral telemetry, endpoint DLP, and runtime hardening into a single coherent layer. That combination doesn’t just protect your enterprise. It creates the conditions for AI agents to operate with genuine autonomy, because your controls can verify their behavior is trustworthy.

Endpoint security, reimagined for AI agents, is what turns AI deployment from an executive risk conversation into an operational confidence builder.

Deploying AI agents across enterprise workflows demands more than good intentions about security. It demands architecture that enforces controls, captures telemetry, and proves compliance from the moment agents go live.

Hymalaia’s enterprise AI platform is built with exactly this foundation in mind. The platform integrates scoped identity governance, behavioral monitoring, and real-time data protection across every agent feature and workflow it powers. Whether you’re running agents across Salesforce, Slack, SharePoint, or your proprietary data sources, Hymalaia gives your security team the visibility and controls needed to deploy with confidence, not caution. For teams thinking about AI business continuity planning alongside security architecture, that integrated posture matters more than any single control. Book a demo today and see how secure AI deployment actually works in practice.

AI agents operate at the endpoint with autonomous access to sensitive files and processes, bypassing traditional perimeter controls that only monitor network traffic and cloud sessions. Unlike standard software, agents make decisions and take actions without human review at each step.

Endpoint coverage enables rapid containment by detecting suspicious agent behavior in real time and isolating affected hosts before lateral movement or data exfiltration can spread across the environment. The faster the isolation, the smaller the blast radius.

Every AI agent needs scoped access to prevent high-risk credential accumulation over time, and endpoint enforcement ensures those access boundaries are respected even when agents execute locally without human oversight.

No, because endpoint DLP performs local classification and blocks unauthorized data transfers before they reach the network, which is the only point of control for agents that create and handle sensitive data entirely within the device.

NIST’s agent evaluation probes require structured, machine-readable audit records of agent behavior, and endpoint telemetry is the primary source for that level of detail, capturing process, file, network, and authentication events tied to each agent identity.