TL;DR:

- Responsible enterprise AI involves active governance, risk management, and operational controls across the entire AI lifecycle. It treats accountability, transparency, fairness, safety, privacy, and inclusiveness as interconnected principles guiding responsible deployment. Organizations must integrate these principles into daily practice: compliance alone is not enough, and proactive due diligence keeps them aligned with evolving global regulations.

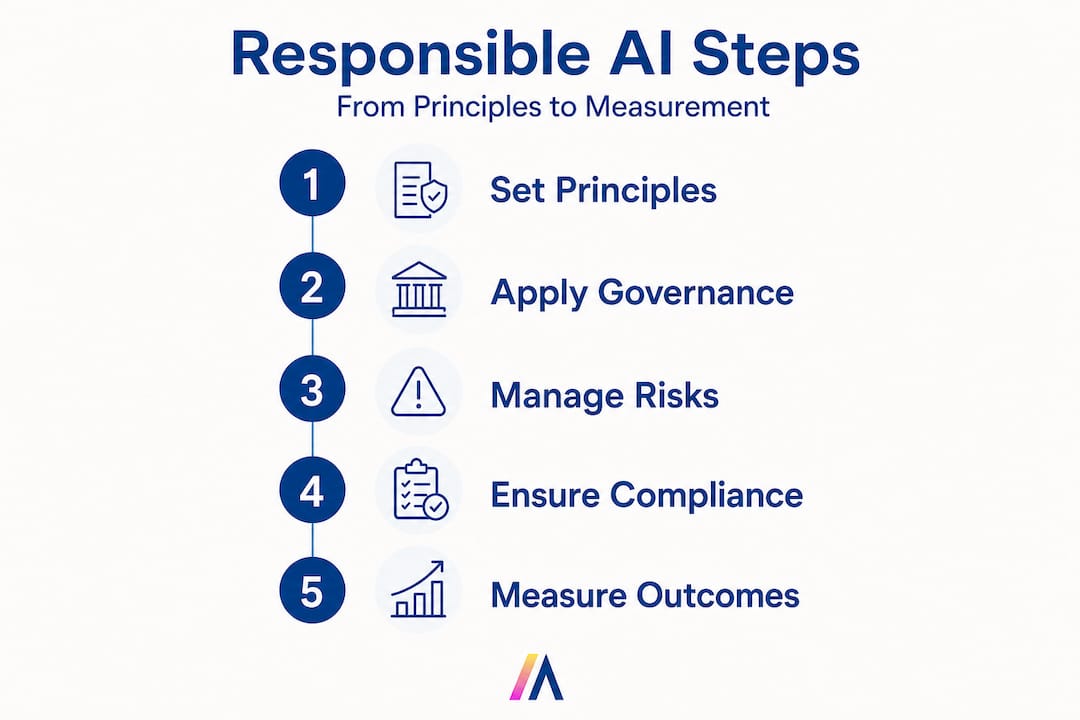

Responsible enterprise AI is not a policy document you file and forget. Most organizations treat it as a set of ethical guidelines, a PR commitment, or a compliance checkbox. That framing is dangerously incomplete. Responsible AI is the organizational approach to developing, assessing, and deploying AI systems with safety, ethics, and trust as explicit requirements across the full AI lifecycle. For enterprise leaders and IT managers, that means accountability structures, governance controls, risk management protocols, and measurable outcomes, not just good intentions.

| Point | Details |
|---|---|
| Responsible AI goes beyond ethics | It requires lifecycle-wide operational controls, governance, and accountability for large organizations. |
| Frameworks and risk management | Practical adoption depends on frameworks like NIST and OECD, supported by enforceable policies and cross-functional governance. |
| Benchmarks are emerging | Measurement and procurement of responsible AI solutions remain challenging due to evolving and incomplete benchmark standards. |
| Due diligence is critical | Enterprises must proactively identify and mitigate AI risks, meeting both internal expectations and external regulatory demands. |

With that context, let’s clarify exactly what “responsible enterprise AI” means, and what it demands from organizations.

Microsoft frames Responsible AI as a set of six principles that guide every decision, from defining the system’s purpose to how it interacts with end users. These principles are not abstract ideals. Each one translates directly into operational requirements for your teams.

Here is how each principle maps to enterprise practice:

| Principle | Definition | Practical implication |
|---|---|---|
| Fairness | AI systems treat all people equitably | Bias audits, diverse training data, outcome monitoring |
| Reliability & safety | Systems perform as intended without harmful failures | Rigorous testing, fallback mechanisms, incident response |
| Privacy & security | Data is protected throughout the AI lifecycle | AES-256 encryption, RBAC, data minimization policies |
| Inclusiveness | AI benefits and is accessible to all relevant users | Accessibility standards, multilingual support, UX testing |
| Transparency | Decisions and processes are explainable | Model documentation, audit logs, explainability tools |
| Accountability | Clear ownership of AI outcomes exists | Defined roles, escalation paths, governance committees |

These principles of responsible enterprise AI are interdependent. You cannot achieve real accountability without transparency. You cannot guarantee reliability without privacy controls. They form a system, not a checklist.

“Responsible AI requirements must be explicit at every stage of the AI lifecycle, from design and development through deployment and ongoing monitoring. Treating them as an afterthought creates compounding risk.”

Consider fairness in practice. A large financial services firm deploying an AI-powered credit scoring model must do more than ensure the algorithm does not discriminate based on protected attributes. It must also monitor outcomes continuously, retrain models when drift is detected, and document every change. That is a sustained operational commitment, not a one-time review.
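To make continuous outcome monitoring concrete, here is a minimal sketch in Python. It compares approval rates across groups and flags a batch for review when the lowest rate falls too far below the highest. The function names, the group labels, and the 0.8 threshold (borrowed from the four-fifths rule of thumb) are assumptions for illustration, not a prescribed method. The right metric and threshold for a credit model should be agreed with legal and risk teams.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Return the approval rate per group from (group, approved) pairs."""
    totals = defaultdict(int)
    approvals = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        if approved:
            approvals[group] += 1
    return {group: approvals[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest group approval rate to the highest (1.0 = parity)."""
    if not rates:
        return 1.0  # no decisions yet, so nothing to compare
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody approved in any group, so no disparity to measure
    return min(rates.values()) / highest


def needs_fairness_review(decisions, threshold=0.8):
    """Flag the batch when the ratio falls below the assumed threshold."""
    return disparate_impact_ratio(approval_rates(decisions)) < threshold


# Hypothetical batch of scored applications: (group label, approved?)
batch = (
    [("group_a", True)] * 8 + [("group_a", False)] * 2
    + [("group_b", True)] * 5 + [("group_b", False)] * 5
)
rates = approval_rates(batch)
print(rates, needs_fairness_review(batch))
```

Running a check like this on every scoring batch, and recording the result, turns outcome monitoring into an auditable routine rather than a periodic manual review.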

Reliability and safety deserve equal attention. Enterprise AI systems often operate in high-stakes environments, from supply chain decisions to clinical support tools. A failure mode that seems minor in testing can cascade into significant operational or reputational damage at scale. Building in circuit breakers, human-in-the-loop review steps, and rollback capabilities is not optional. It is foundational.
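The circuit breaker and human-in-the-loop ideas above can be sketched in a few lines of Python. The class below is a hypothetical illustration: `ModelCircuitBreaker`, its parameters, and the default thresholds are assumptions, and the model is assumed to return a `(label, confidence)` pair. It stops calling a failing model after repeated errors, falls back to a safe default, and routes low-confidence predictions to a person.

```python
class ModelCircuitBreaker:
    """Wraps a model call with a failure counter, a fallback, and human review."""

    def __init__(self, model_fn, fallback_fn, max_failures=3, min_confidence=0.7):
        self.model_fn = model_fn          # assumed to return (label, confidence)
        self.fallback_fn = fallback_fn    # safe default used when the model is unavailable
        self.max_failures = max_failures
        self.min_confidence = min_confidence
        self.consecutive_failures = 0

    @property
    def is_open(self):
        """The breaker is open (model bypassed) after too many consecutive failures."""
        return self.consecutive_failures >= self.max_failures

    def reset(self):
        """Close the breaker again, for example after a rollback or a fix."""
        self.consecutive_failures = 0

    def decide(self, request):
        if self.is_open:
            return {"decision": self.fallback_fn(request), "source": "fallback"}
        try:
            label, confidence = self.model_fn(request)
        except Exception:
            self.consecutive_failures += 1
            return {"decision": self.fallback_fn(request), "source": "fallback"}
        self.consecutive_failures = 0
        if confidence < self.min_confidence:
            # Low confidence: do not act automatically, hand off to a reviewer.
            return {"decision": None, "source": "human_review"}
        return {"decision": label, "source": "model"}
```

In this sketch the breaker stays open until someone calls `reset()`, which keeps a human decision in the recovery path. A production version would likely add time-based recovery and alerting, which are left out here.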

Once the core principles are clear, the next question is how those ideals become daily reality for complex enterprises.

For large organizations, responsible AI operationalization typically means governance plus risk management that can be executed across roles, processes, and controls. Governance defines the rules. Risk management identifies and mitigates what can go wrong when those rules are tested.

Here are the essential governance mechanisms every enterprise should establish:

The distinction between governance controls and risk controls matters for resource allocation and accountability:

| Control type | Focus | Examples |
|---|---|---|
| Governance controls | Defining rules and ownership | Policies, standards, roles, review boards |
| Risk controls | Identifying and mitigating threats | Risk assessments, model validation, monitoring alerts |
| Compliance controls | Meeting external requirements | Regulatory mapping, audit trails, documentation |

Pro Tip: Build cross-functional AI responsibility from the start, not after your first incident. Organizations that involve legal, HR, and business unit leaders in AI governance from day one tend to resolve issues faster and earn stronger stakeholder trust. Retrofitting governance after deployment is expensive and disruptive.

Zero trust security offers a useful reference point here. Its core principle, that no user, system, or data source is trusted by default, maps directly onto responsible AI governance. Every model interaction, data access event, and API call should be authenticated, logged, and subject to least-privilege controls.
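A deny-by-default permission check with an audit trail might look like the following Python sketch. The role names, the `model:query` and `model:deploy` action strings, and the `authorize` function are hypothetical, and authentication of the caller is assumed to have happened upstream. The point is only that every request, allowed or not, leaves a log entry.

```python
from datetime import datetime, timezone

# Hypothetical role-to-permission mapping; anything not listed is denied.
ROLE_PERMISSIONS = {
    "analyst": {"model:query"},
    "ml_engineer": {"model:query", "model:deploy"},
}


def authorize(user, role, action, audit_log):
    """Check least-privilege access and record the attempt either way."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    audit_log.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "action": action,
        "allowed": allowed,
    })
    return allowed


audit_log = []
authorize("ana", "analyst", "model:query", audit_log)    # permitted
authorize("ana", "analyst", "model:deploy", audit_log)   # denied: not in role
authorize("sam", "contractor", "model:query", audit_log) # denied: unknown role
```

Logging denied attempts as well as permitted ones matters for governance, because the denials are often what an auditor or incident responder needs to see.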

Governance inside the organization is only part of the puzzle. Responsible AI is increasingly defined by outside expectations too.

Responsible enterprise AI connects directly to due diligence expectations: enterprises are expected to proactively identify and address adverse impacts from AI systems they develop or use. This is not just a best practice. It is becoming a legal and regulatory expectation in multiple jurisdictions.

Due diligence in AI means going beyond your own organization’s walls. It requires understanding the full AI value chain, including third-party models, training data sources, and downstream deployment contexts.

Enterprise duties under responsible AI due diligence include:

“Due diligence in responsible AI is not the same as compliance. Compliance asks ‘did we follow the rules?’ Due diligence asks ‘did we proactively prevent harm?’ The bar is higher, and the expectation is ongoing.” (OECD framing)

Pro Tip: Start your AI value chain mapping with a simple inventory. List every AI system in production, the data sources it uses, the vendor or team that built it, and the business process it affects. This inventory becomes the foundation for impact assessments, audit responses, and regulatory disclosures. Most organizations are surprised by how many AI systems are already running without formal documentation.
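The inventory described in this tip can start as a very small data structure. The Python sketch below is one possible shape, with hypothetical field names and example systems. It records the four items listed above plus a documentation flag, and answers two common questions: which systems lack formal documentation, and which systems depend on a given data source.

```python
from dataclasses import dataclass, field


@dataclass
class AISystemRecord:
    name: str
    owner: str                     # vendor or internal team that built it
    business_process: str          # process the system affects
    data_sources: list = field(default_factory=list)
    has_formal_documentation: bool = False


def undocumented(inventory):
    """Names of systems running without formal documentation."""
    return [r.name for r in inventory if not r.has_formal_documentation]


def systems_using(inventory, data_source):
    """Names of systems that depend on a given data source."""
    return [r.name for r in inventory if data_source in r.data_sources]


# Hypothetical entries for illustration only.
inventory = [
    AISystemRecord("credit-scoring", "risk-analytics", "loan approval",
                   ["crm", "bureau-data"], has_formal_documentation=True),
    AISystemRecord("support-chatbot", "external-vendor", "customer support",
                   ["crm", "help-center"]),
]
print(undocumented(inventory))
print(systems_using(inventory, "crm"))
```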

The global regulatory landscape is accelerating. The EU AI Act introduces risk-based obligations for high-risk AI systems, including mandatory conformity assessments and post-market monitoring. The UK’s AI Security Institute (formerly the AI Safety Institute) is developing evaluation frameworks. In the United States, sector-specific guidance from financial regulators and the FDA is shaping responsible AI expectations in banking and healthcare. Compliant enterprise AI deployments require you to track these developments continuously, not just at contract renewal time.

Interoperability between frameworks is also critical. The NIST AI Risk Management Framework, ISO/IEC 42001, and the OECD AI Principles are designed to complement each other. Organizations that map their controls across multiple frameworks avoid duplicating effort and demonstrate broader assurance to regulators and partners.
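One lightweight way to map controls across frameworks is a single table keyed by internal control, as in the Python sketch below. The control names and the specific mappings are illustrative assumptions, and the ISO/IEC 42001 entry is a placeholder to be replaced with the clause your own assessment identifies. The helper simply reports which frameworks each control has not yet been mapped to.

```python
FRAMEWORKS = ("NIST AI RMF", "ISO/IEC 42001", "OECD AI Principles")

# Hypothetical internal controls and illustrative mappings.
CONTROL_MAP = {
    "model-risk-assessment": {
        "NIST AI RMF": "Map",
        "ISO/IEC 42001": "clause to be confirmed",  # placeholder, not a real citation
        "OECD AI Principles": "Robustness, security and safety",
    },
    "production-monitoring": {
        "NIST AI RMF": "Measure",
    },
    "governance-committee": {
        "NIST AI RMF": "Govern",
        "OECD AI Principles": "Accountability",
    },
}


def coverage_gaps(control_map, frameworks=FRAMEWORKS):
    """Return, per control, the frameworks it has not been mapped to yet."""
    gaps = {}
    for control, references in control_map.items():
        missing = [f for f in frameworks if f not in references]
        if missing:
            gaps[control] = missing
    return gaps


print(coverage_gaps(CONTROL_MAP))
```

Keeping one control definition with several framework references is what avoids the duplicated effort: the control is implemented and evidenced once, then cited wherever it applies.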

After understanding principles and regulatory requirements, leaders must also grasp how AI responsibility is measured and assured. Here the picture is still evolving.

Benchmarks for responsible AI are emerging, but standardized, widely accepted evaluation suites for responsible AI criteria are not yet settled. This creates a real challenge for enterprise procurement teams, risk officers, and boards who need to compare AI systems and validate vendor claims.

The current benchmark landscape includes:

Here is how current benchmarks compare for enterprise use:

| Benchmark | Coverage | Primary focus | Key limitation |
|---|---|---|---|
| HELM Safety | Broad | Toxicity, bias, robustness | Limited enterprise context |
| AIR-Bench | Moderate | Safety, alignment | Still maturing |
| TruthfulQA | Narrow | Factual accuracy | Single dimension only |
| BBQ | Narrow | Social bias | QA-specific, not general |

The measurement gap matters. Without unified benchmarks, enterprise buyers cannot make apples-to-apples comparisons between AI vendors. A vendor claiming their model is “safe” may be using a proprietary evaluation that is not independently verifiable. This creates procurement risk and erodes board-level confidence in AI investments.

Only a small fraction of enterprise AI procurement processes currently require vendors to submit results from standardized responsible AI evaluations. This gap is closing, but slowly.

The practical implication for your organization is this: do not wait for universal standards to emerge before you measure. Build your own internal evaluation criteria based on the principles outlined above. Use available benchmarks as one input, not the only input. And require vendors to provide AI risk management features and documentation that support your own assessment process.
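An internal scorecard along these lines could be as simple as the Python sketch below. The criteria, the weights, and the `score_vendor` function are assumptions chosen for illustration, and each evidence value is assumed to be a score between 0 and 1 that your team has already derived from benchmarks, documentation, or its own testing. Missing evidence is reported separately instead of being silently scored as zero.

```python
# Hypothetical criteria and weights; adjust to your own risk profile.
WEIGHTS = {"fairness": 0.3, "safety": 0.3, "transparency": 0.2, "privacy": 0.2}


def score_vendor(evidence, weights=WEIGHTS):
    """Weighted average over criteria with evidence, plus the list of gaps."""
    covered = {c: w for c, w in weights.items() if c in evidence}
    missing = sorted(c for c in weights if c not in evidence)
    covered_weight = sum(covered.values())
    if covered_weight == 0:
        return {"score": None, "missing": missing}
    weighted_sum = sum(evidence[c] * w for c, w in covered.items())
    return {"score": weighted_sum / covered_weight, "missing": missing}


# Hypothetical vendor that provided no privacy evidence.
result = score_vendor({"fairness": 0.8, "safety": 0.6, "transparency": 1.0})
print(result)
```

Separating the score from the gap list keeps the conversation with a vendor specific: the score summarizes what was shown, and the missing list is the documentation request.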

The absence of universal benchmarks also means that transparency becomes a competitive differentiator. Organizations that proactively document their responsible AI practices, publish model cards, and share audit results build trust faster than those that wait for regulators to mandate disclosure.

With all the external pressure and moving standards, it is easy to treat responsible AI as a compliance tick-box. Submit the impact assessment, appoint an ethics officer, reference the NIST framework in your annual report. Done. But that approach misses the point entirely, and it misses the opportunity.

The organizations that get the most value from responsible AI are not the ones with the most elaborate policy documents. They are the ones where every engineer asks “what could go wrong with this feature?” before shipping, where every sales team member understands why certain AI-generated recommendations require human review, and where every product decision is stress-tested against fairness and safety criteria.

Responsible AI cannot live only in the compliance function. When it does, it becomes reactive. Teams work around the process, documentation lags behind reality, and the first time a model causes a real-world problem, the governance framework turns out to be a paper shield.

The more effective model is distributed accountability. That means training engineers on bias detection and model documentation. It means giving customer support teams the language to explain AI-driven decisions to affected users. It means rewarding product managers who flag responsible AI concerns early, even when it delays a launch. Securing AI systems is a useful analogy here. Security practices that exist only as policies fail. Security practices that influence daily developer behavior, code review standards, and deployment gates actually reduce risk.

The challenge we put to enterprise leaders is direct: how does your organization reward practical, responsible choices at every level? If the only incentive is speed to deployment, responsible AI will always lose to the deadline. Build it into performance criteria, design reviews, and vendor selection. That is when it becomes real.

Ready to put responsible AI into practice?

Understanding the frameworks is one thing. Operationalizing them across a large, complex organization is another. Hymalaia’s enterprise AI platform is built to help you do exactly that, from governance-ready deployment architecture to real-time audit logging and role-based access controls that enforce your responsible AI policies automatically.

Whether you are deploying AI agents across sales, operations, or technical management, Hymalaia’s comprehensive AI features support the full responsible AI lifecycle. That includes RAG-powered responses grounded in your verified enterprise data, GDPR-compliant data handling, and integrations with over 50 enterprise tools that keep your AI outputs synchronized with your source-of-truth systems. For teams that need structured support in building governance frameworks and training staff, our AI consulting and training services provide hands-on guidance tailored to your industry and risk profile. 🏔️

Responsible enterprise AI requires operational controls, governance, and risk management at all lifecycle stages, not just adherence to ethical principles. It means accountability structures, measurable outcomes, and enforceable policies, not just stated values.

Common frameworks include the NIST AI Risk Management Framework and the OECD due diligence guidance, both of which provide actionable steps for large organizations to manage AI risk systematically.

Standardized evaluation suites for responsible AI are still not settled, though emerging tools like HELM Safety and AIR-Bench are leading efforts to establish common measurement standards.

Due diligence ensures that enterprises proactively identify and address negative impacts caused by their AI systems, as global regulators increasingly expect, going beyond reactive compliance to active harm prevention.