TL;DR:

- Most enterprise security methods are insufficient for AI because AI systems operate across multiple trust boundaries and are often trusted implicitly once authenticated. Zero Trust replaces this trust assumption with continuous verification, rigorous identity management, and boundary controls tailored to AI workflows. Implementing Zero Trust principles strengthens AI security, compliance, and accountability at enterprise scale.

Most enterprise security teams believe that strong network controls, endpoint protection, and identity management are enough to secure AI deployments. They are not. AI systems operate differently from traditional software, and that difference creates exploitable gaps that classic security models were never designed to close. This guide explains exactly why Zero Trust is the right framework for AI, how it maps to the unique trust boundaries that AI creates, and what practical steps your organization can take to protect models, agents, and data at enterprise scale.

| Point | Details |
|---|---|
| AI amplifies security risks | Traditional models cannot protect distributed, autonomous AI workflows in large organizations. |
| Continuous verification is essential | Zero Trust replaces implicit trust with explicit checks for every action involving AI. |
| Trust boundaries must be mapped | Security teams should identify user-agent, model-data, and automation boundaries and enforce controls at each. |
| Auditability improves compliance | Zero Trust makes audit and regulatory mapping easier by logging every access and automating evidence gathering. |

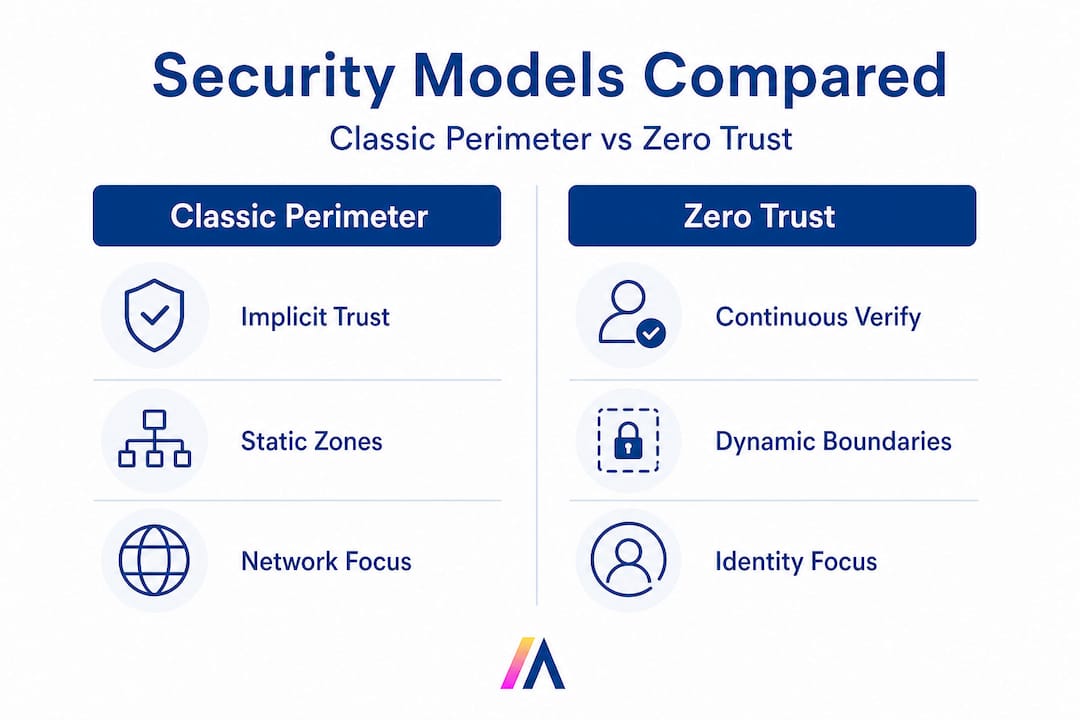

Traditional security architecture was built around a clear idea: defend the perimeter, verify who enters, and then trust what happens inside. That model worked reasonably well when applications were monolithic, data lived in predictable locations, and users accessed systems through known endpoints.

AI breaks every one of those assumptions.

Modern enterprise AI involves dynamic, interconnected components: foundation models, retrieval pipelines, autonomous agents, orchestration layers, and dozens of integrated data sources. An AI agent querying your Salesforce CRM, summarizing data from SharePoint, and triggering a workflow in Slack is not operating inside a perimeter. It is operating across many perimeters simultaneously, often with elevated data access that no human user would be granted in a single session.

Classic perimeter security models cannot address distributed and agentic AI workflows. The risk is not theoretical. When an AI agent has implicit trust after initial authentication, a compromised prompt, a misconfigured integration, or an over-permissioned model can silently exfiltrate sensitive data or execute unauthorized actions without triggering any traditional alarm.

The specific risks that emerge when classic security meets AI include:

- Prompt injection attacks, where malicious inputs manipulate agent behavior by exploiting implicit trust in model outputs
- Lateral data exposure, where an agent authorized for one task retrieves data far outside its intended scope
- Unaudited automation, where AI workflows execute consequential actions with no human checkpoint or log trail
- Third-party model risk, where external model APIs introduce unverified trust relationships into your environment
- Agent identity ambiguity, where automated processes have no distinct identity and therefore no enforceable access policy

Each of these risks shares a root cause: implicit trust. The moment any component in your AI stack is trusted by default, you have a gap. An enterprise AI security platform designed around Zero Trust eliminates that gap by requiring verification at every step, for every component, every time.

Zero Trust is not a product. It is a security philosophy and architecture that replaces implicit trust with continuous, context-aware verification. Applied to AI, it means no model, agent, user, or automated process is trusted simply because it authenticated once or operates inside your infrastructure.

Operationally, enterprises implement Zero Trust for AI by requiring explicit, continuous verification for all actions involving models, prompts, and data. NIST’s guidance on Zero Trust architecture (SP 800-207) is especially relevant here because it was designed with distributed, hybrid environments in mind, which maps directly to how enterprise AI systems are structured today.

The core pillars of a Zero Trust architecture for AI are:

- Strong identity and authentication for every entity, including users, agents, models, and automated processors
- Granular access control that limits what each identity can access, query, or execute based on the principle of least privilege
- Continuous monitoring of all actions, data flows, and model interactions in real time
- Full auditability, with immutable logs that capture who or what did what, when, and why

Here is how Zero Trust compares to classic perimeter security when applied to AI environments:

| Feature | Classic perimeter model | Zero Trust model |
|---|---|---|
| Trust basis | Location or network membership | Continuous identity and context verification |
| Access control | Broad, role-based after login | Granular, least-privilege, per-action |
| AI agent identity | Not addressed | Explicit identity assigned to each agent |
| Data access scope | Often over-permissioned | Scoped to task and verified continuously |
| Monitoring | Perimeter-focused, periodic | Real-time, full-stack, including model interactions |
| Breach mitigation | Relies on perimeter holding | Assumes breach; limits blast radius through segmentation |
| Compliance readiness | Partial, network-level only | Full lifecycle, including AI-specific governance |

The shift from the left column to the right column is not just architectural. It requires rethinking AI identity management from the ground up, treating agents and models as first-class security principals rather than invisible background processes.

Pro Tip: Continuously map identities not just to users, but to agents, models, and automated processors in the AI stack. Every entity that touches data or executes an action needs a verifiable identity, a defined scope, and a logged record of its activity.
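To make that tip concrete, here is a minimal sketch of what a record for each principal might look like. The class names, fields, and the example agent ID are assumptions made for illustration; in practice this registry would be backed by your identity provider rather than an in-memory dictionary.

```python
from dataclasses import dataclass
from enum import Enum


class PrincipalKind(Enum):
    USER = "user"
    AGENT = "agent"
    MODEL = "model"
    PROCESSOR = "processor"


@dataclass(frozen=True)
class Principal:
    principal_id: str        # unique ID, ideally issued by your identity provider
    kind: PrincipalKind
    scopes: frozenset        # the only scopes this principal may use
    owner: str               # accountable human or team


class IdentityRegistry:
    """Hypothetical in-memory registry, for illustration only."""

    def __init__(self) -> None:
        self._principals = {}

    def register(self, principal: Principal) -> None:
        if principal.principal_id in self._principals:
            raise ValueError(f"duplicate identity: {principal.principal_id}")
        if not principal.owner:
            raise ValueError("every principal needs an accountable owner")
        self._principals[principal.principal_id] = principal

    def resolve(self, principal_id: str) -> Principal:
        # Unknown identities are rejected rather than treated as anonymous.
        try:
            return self._principals[principal_id]
        except KeyError:
            raise PermissionError(f"unregistered identity: {principal_id}") from None


registry = IdentityRegistry()
registry.register(
    Principal(
        "agent:crm-summarizer",          # placeholder agent ID
        PrincipalKind.AGENT,
        frozenset({"crm:read"}),
        owner="sales-ops",
    )
)
```

The design choice worth noting is that `resolve` fails closed: an agent that was never registered has no identity and therefore no access, which is the opposite of treating background processes as invisible.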

One of the most important concepts in Zero Trust for AI is the trust boundary. A trust boundary is any point where data, control, or authority passes from one entity or component to another. In traditional IT, trust boundaries are relatively simple: user to application, application to database. In AI, they multiply rapidly.

Organizations must define and enforce trust boundaries between users and agents, models and data, and humans and automation for effective AI security. Failing to map and control these boundaries is where most enterprise AI security programs fall apart.

Here are the primary AI trust boundaries and the risks they introduce:

| Trust boundary | Associated risk | Recommended control |
|---|---|---|
| User to agent | Unauthorized task execution, privilege escalation | RBAC with per-session scope limits |
| Agent to model | Prompt injection, model manipulation | Input validation, sandboxed execution |
| Model to data | Data leakage, over-retrieval | Attribute-based access control on data sources |
| Human to automation | Unaudited consequential actions | Human-in-the-loop checkpoints for high-impact tasks |
| Agent to external API | Third-party data exposure | API allowlisting, encrypted channels, rate limiting |
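As one illustration of a boundary control from the table, the agent-to-external-API row could be enforced with a check like the sketch below. The hostnames are placeholders and the function name is hypothetical; rate limiting is left out to keep the example short.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist; these hostnames are placeholders, not real services.
ALLOWED_API_HOSTS = frozenset({"api.example-crm.com", "models.example-vendor.com"})


def is_allowed_external_call(url: str) -> bool:
    """Return True only for HTTPS calls to an explicitly allowlisted host."""
    parts = urlsplit(url)
    if parts.scheme != "https":           # encrypted channels only
        return False
    host = (parts.hostname or "").lower()
    return host in ALLOWED_API_HOSTS      # exact match, so look-alike hosts fail
```

Matching on the parsed hostname rather than on a substring of the URL is deliberate: a URL such as `https://api.example-crm.com.evil.test/` contains an allowlisted name but resolves to a different host, and the exact-match check rejects it.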

Implementing boundary controls across multi-component AI workflows requires a structured approach. Here are the five steps to get it right:

1. Map all interactions. Document every point where data moves between components: user inputs, model queries, agent actions, data retrievals, and external API calls.
2. Assign explicit identities. Every agent, model endpoint, and automated process must have a unique, verifiable identity tied to your identity provider.
3. Define allowed actions. For each identity, specify exactly what it can access, query, or execute. Default to deny; explicitly permit only what is necessary.
4. Set continuous monitoring points. Deploy logging and anomaly detection at every boundary. Real-time alerts for out-of-scope access or unusual data volumes are essential.
5. Document accountability. For every high-impact action, there must be a clear record of which identity initiated it, what data it touched, and what outcome it produced.

Skipping any of these steps creates exploitable gaps. Prompt injection attacks, for example, are largely a boundary control failure: the agent-to-model boundary is not enforcing input validation, so a malicious instruction can redirect the agent’s behavior entirely. Boundary discipline is not optional in enterprise AI deployments.
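The "default to deny" and "document accountability" steps can be sketched together in a few lines. This is a simplified illustration, not a production policy engine: the policy table, identity names, and log fields are all assumptions, and a real deployment would use a dedicated policy service and durable log storage.

```python
from datetime import datetime, timezone

# Hypothetical policy table: identity -> (action, resource) pairs it may perform.
POLICY = {
    "agent:support-triage": {("read", "tickets"), ("update", "tickets")},
    "agent:crm-summarizer": {("read", "crm")},
}

AUDIT_LOG = []  # in-memory stand-in for a durable audit store


def authorize(identity: str, action: str, resource: str) -> bool:
    """Default deny: allow only pairs explicitly granted to this identity."""
    allowed = (action, resource) in POLICY.get(identity, set())
    # Record every decision, including denials, so out-of-scope attempts are visible.
    AUDIT_LOG.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "identity": identity,
        "action": action,
        "resource": resource,
        "decision": "allow" if allowed else "deny",
    })
    return allowed
```

Because unknown identities fall through to an empty permission set, an unregistered agent is denied without any special-case code, and the denial itself becomes a monitoring signal.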

Regulatory pressure on AI is accelerating. GDPR already imposes obligations on automated decision-making. HIPAA requires strict data access controls for health information. CPRA extends consumer rights to AI-driven profiling. In every case, the compliance question is the same: can you prove what your AI did, why it did it, and who authorized it?

Zero Trust supports compliance by improving auditability and enforcing the access controls and monitoring needed for regulations. But compliance for AI goes further than traditional IT compliance. Automated actions, third-party model usage, and decisions that are difficult to explain all require governance measures that Zero Trust enables but does not automatically provide.

Zero Trust for AI requires monitoring and governance across the entire AI lifecycle, from data ingestion and model training to inference, agent execution, and output delivery. That means your compliance program needs to extend into every layer of the AI stack, not just the network or identity layer.

The top audit and monitoring practices for Zero Trust AI include:

- Identity logs that record every authentication event for users, agents, and models, with timestamps and context
- Agent action logs that capture every task an agent executes, including the data it accessed and the outputs it produced
- Data usage reporting that tracks which datasets were queried, by which identity, under which policy
- Model interaction logs that record prompts, completions, and any flagged anomalies in model behavior
- Policy change audit trails that document every modification to access controls, agent permissions, or governance rules
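Whichever logs you keep, auditors will want some assurance that entries were not altered after the fact. One common technique, shown here only as a sketch, is hash chaining: each entry stores a hash of the previous one, so any edit breaks the chain. The function names and entry layout are assumptions, and this makes a log tamper-evident rather than truly immutable; write-once storage would still be needed for that.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    # Canonical JSON so the same event always hashes to the same value.
    payload = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list, event: dict) -> None:
    """Append an event, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": dict(event), "prev": prev_hash, "hash": _digest(prev_hash, event)})


def verify_chain(log: list) -> bool:
    """Recompute every hash; any edited, removed, or reordered entry fails."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A typical use would be to call `append_entry` from every logging point listed above and run `verify_chain` as part of periodic audit checks.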

Pro Tip: Maintain a clear chain of responsibility for all high-impact AI actions to ensure audit trails and legal continuity. When a regulator or auditor asks who authorized an AI-driven decision, you need a specific, documented answer, not a general reference to “the system.”

Mapping Zero Trust controls to AI-specific compliance obligations requires deliberate effort. For GDPR Article 22, which governs automated decision-making, you need logs that show what data the model used, what the decision was, and whether a human review option was available. For HIPAA, you need attribute-based access controls that prevent AI agents from retrieving protected health information outside their authorized scope. Zero Trust provides the infrastructure for these controls. Your governance team must configure and maintain them.
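For the Article 22 case, the three items named above (data used, decision made, human review availability) could be captured in a structured record like the following sketch. The field names are illustrative assumptions, not a legal template; your governance and legal teams would decide what the record must actually contain.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class AutomatedDecisionRecord:
    decision_id: str
    model_identity: str              # which model or agent produced the decision
    input_data_refs: tuple           # pointers to the data used, not the data itself
    decision: str
    human_review_available: bool
    authorized_by: str               # accountable human owner


def to_log_line(record: AutomatedDecisionRecord) -> str:
    """Serialize the record as one JSON line for an audit log."""
    return json.dumps(asdict(record), sort_keys=True)
```

Storing references to the input data rather than copies keeps protected information out of the log itself while still letting an auditor trace what the model saw.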

Zero Trust enforces verification. It does not enforce judgment. As AI agents become more autonomous, executing multi-step workflows with real business consequences, the need for human oversight does not diminish. It intensifies.

“Agentic AI increases the importance of runtime governance and human accountability for high-impact actions.”

Agentic AI workflows present governance challenges that pure technical controls cannot fully address. An agent that can autonomously send emails, update records, trigger payments, or escalate support tickets is operating with authority that carries real accountability. If that agent makes an error or is manipulated, the question of who is responsible must have a clear answer.

Effective runtime governance requires assigning human owners to every critical agent function. That means a named person or team is accountable for the agent’s behavior, its outputs, and any incidents it causes. This is not just a compliance requirement. It is a practical necessity for maintaining organizational trust in AI systems.

Here are the steps to effective runtime governance for agentic AI:

1. Pre-deployment checklists. Before any agent goes live, verify that its identity is registered, its permissions are scoped, its actions are logged, and a human owner is assigned.
2. Real-time action logging. Every action an agent takes must be logged with full context: the triggering input, the data accessed, the action executed, and the output produced.
3. Threshold-based human checkpoints. Define categories of high-impact actions (financial transactions above a set value, data deletions, external communications) that require human approval before execution.
4. Incident triage protocols. When an agent behaves unexpectedly, your team needs a documented process to isolate the agent, review its logs, identify the root cause, and restore normal operations.
5. Periodic governance reviews. Agent permissions and behaviors should be reviewed on a regular schedule, not just at deployment. As your data environment evolves, agent access policies must evolve with it.

Zero Trust and runtime governance are complementary, not competing. Zero Trust ensures that every action is verified and logged. Runtime governance ensures that humans remain accountable for what those verified, logged actions actually accomplish.
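A threshold-based human checkpoint, as described in the steps above, might look like the sketch below. The threshold value, action names, and return strings are assumptions chosen for illustration; real thresholds belong in governed policy rather than in code.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical values for illustration only.
PAYMENT_APPROVAL_THRESHOLD = 1_000.00
ALWAYS_REVIEW = frozenset({"delete_data", "send_external_email"})


@dataclass(frozen=True)
class AgentAction:
    agent_id: str
    kind: str            # e.g. "payment", "delete_data", "update_record"
    amount: float = 0.0  # only meaningful for payments


def requires_human_approval(action: AgentAction) -> bool:
    """High-impact categories always need review; payments only above the threshold."""
    if action.kind in ALWAYS_REVIEW:
        return True
    return action.kind == "payment" and action.amount > PAYMENT_APPROVAL_THRESHOLD


def execute(action: AgentAction, approved_by: Optional[str] = None) -> str:
    """Run the action only if it is low impact or a named human approved it."""
    if requires_human_approval(action) and not approved_by:
        return "pending_approval"
    return "executed"
```

Requiring a named approver rather than a boolean flag ties each high-impact action to a specific person, which supports the accountability goal described in this section.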

Here is the uncomfortable truth that most security frameworks avoid saying directly: Zero Trust, applied rigorously, is the minimum viable security posture for enterprise AI. It is not a competitive advantage. It is the baseline that every organization deploying AI agents at scale should already be operating from.

The real differentiation comes from what you build on top of that baseline. Organizations that treat Zero Trust as a checkbox, implementing identity controls and logging without genuinely enforcing least-privilege access or reviewing audit trails, are creating a false sense of security that is arguably more dangerous than no framework at all.

We have seen enterprises deploy sophisticated AI platforms with strong perimeter controls and then grant their AI agents broad, persistent access to every connected data source because “it’s easier to configure.” That single decision undermines every other security investment. Zero Trust only works when the principles are enforced consistently, especially at the boundaries that feel inconvenient to lock down.

The organizations that get this right treat their AI agents the way they treat privileged human users: with verified identities, scoped permissions, continuous monitoring, and clear accountability. That discipline is what separates a genuinely secure AI deployment from one that is waiting for an incident.

If you are ready to move from security principles to a production-ready AI deployment, Hymalaia is built for exactly this challenge. Our platform deploys autonomous AI agents across your enterprise with role-based access controls, AES-256 encryption, GDPR-compliant data handling, and full audit logging built in from day one.

Hymalaia connects with over 50 enterprise tools including Salesforce, Slack, Google Workspace, and SharePoint, giving your agents access to the data they need while enforcing the boundaries they must respect. Every agent action is logged, every identity is verified, and every data access is scoped to the task at hand. Whether you deploy on cloud, on-premise, or hybrid infrastructure, you get the governance controls that Zero Trust demands and the productivity gains your teams are waiting for. Book a demo today and see what secure, scalable agentic AI looks like in practice.

Zero Trust improves AI security by requiring continuous verification for every access and action, closing gaps left by traditional network or perimeter-based defenses that assume trust after initial login.

Zero Trust supports many compliance requirements through identity verification, strict access controls, and auditing, but it does not cover everything on its own. Your governance framework must map those controls to AI-specific obligations such as automated decision documentation.

AI-specific trust boundaries reflect interactions like user-agent and model-data. Defining these boundaries and implementing controls across them is essential to prevent data leakage, prompt injection, and unauthorized agent actions.

Zero Trust requires detailed logging of every action, which creates auditable trails for AI model usage, data access, and agent decisions. Zero Trust supports compliance through monitoring and governance across the full AI lifecycle, giving auditors the evidence they need.