TL;DR:

- A single AI chatbot incident exposed sensitive data and resulted in significant regulatory fines due to data-in-use gaps. Traditional encryption methods fail during AI processing, necessitating advanced solutions like fully homomorphic encryption and trusted execution environments for comprehensive data protection. Implementing layered, adaptive security architectures ensures compliance and mitigates risks across the entire AI data lifecycle.

A single AI chatbot incident exposed 1,247 PII records and triggered $412,000 in regulatory fines, not because the system lacked perimeter security, but because sensitive data was unprotected at the exact moment the AI processed it. That is the data-in-use gap, and it is the most exploited blind spot in enterprise AI deployments today. Most organizations invest heavily in encrypting data at rest and in transit, then leave it completely exposed the moment a model touches it. This article walks you from that vulnerability through the full encryption lifecycle, including emerging methods like fully homomorphic encryption (FHE), so you can make informed, defensible decisions about AI data security.

| Point | Details |
|---|---|
| Data-in-use vulnerability | Sensitive data is most exposed during AI processing unless protected by in-use encryption. |
| Beyond traditional encryption | AES and TLS secure storage and transit, but only advanced methods like FHE secure data-in-use. |
| Compliance best practices | Pair encryption with monitoring, access controls, and verification to satisfy GDPR and HIPAA. |
| Performance is improving | Modern homomorphic encryption now supports enterprise application speeds, reducing prior bottlenecks. |
| Practical implementation | Adopt hybrid encryption and runtime checks for robust AI security and operational excellence. |

Enterprise AI platforms process enormous volumes of sensitive data: customer records, financial transactions, health information, proprietary intellectual property. Traditional security models were designed for databases and file systems, not for environments where models actively compute on that data in real time.

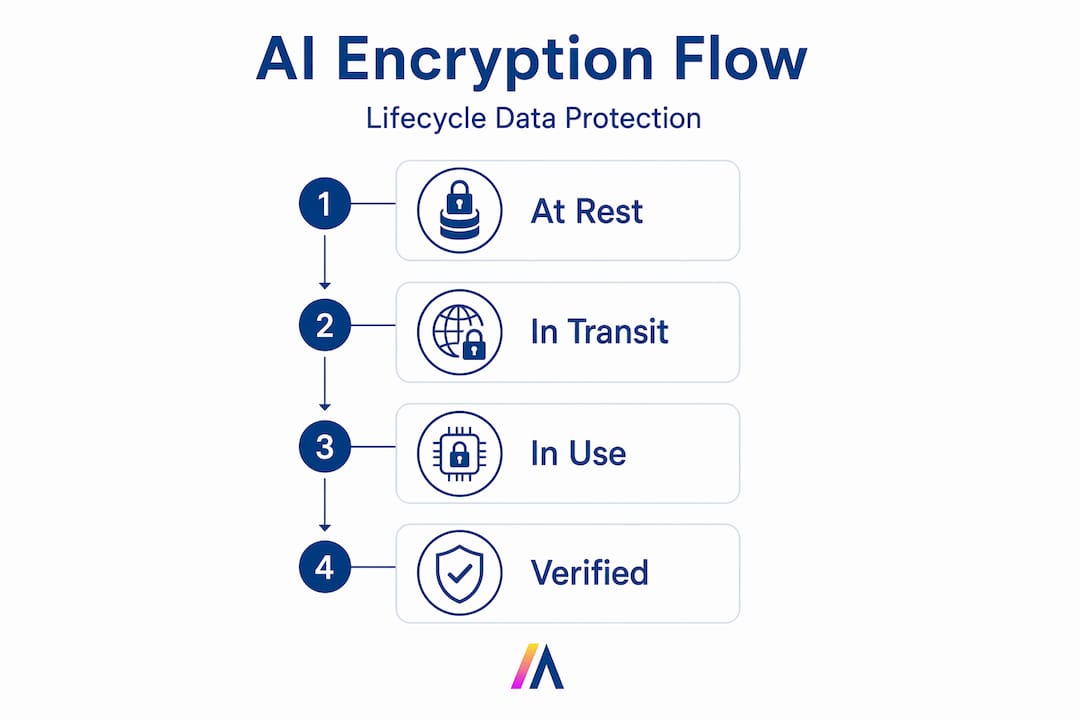

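Understanding the three states of data is foundational: data at rest (in storage), data in transit (moving across a network), and data in use (being actively processed).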

The data-in-use gap is where traditional encryption protections fail during AI model processing, training, and inference. The moment data enters an AI runtime, standard encryption is stripped away so the processor can work with it. That window, even if milliseconds long, is a real attack surface.

“Protecting data while it is being actively processed is the defining challenge of enterprise AI security. Standard at-rest and in-transit protections are necessary but insufficient once AI models enter the equation.” — AI security researchers, 2025

The risks during this exposed window are more serious than most IT leaders realize. Adversarial attacks can inject malicious inputs designed to manipulate model outputs. Model poisoning can corrupt training data before it is written back to protected storage. Insider threats can intercept memory dumps during inference. These are not theoretical scenarios. They are documented attack vectors actively exploited in production environments.

CISA and NIST recommend continuous encryption across the full AI lifecycle, covering data at rest, in transit, and critically in use, as protection against breaches, model poisoning, and adversarial attacks. Their guidance is explicit: AI platforms require a fundamentally different security architecture than traditional enterprise software.

Layering zero trust principles onto AI security adds another critical dimension. Zero trust assumes no request is inherently safe, even from authenticated internal systems. In an AI context, that means every inference call, every training batch, and every model update must be verified and monitored continuously.

Most enterprise environments already deploy a solid baseline of encryption standards. The question is not whether these standards are good, it is whether they cover everything an AI platform demands.

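Standard encryption methodologies for AI environments include AES-256 for data at rest, TLS 1.3 for data in transit, FIPS 140-3 validated cryptographic modules, and centralized key management through services such as AWS KMS or Azure Key Vault.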

Here is where the architecture breaks down. Every one of these methods requires decrypting data before computation. You cannot run a neural network inference on ciphertext using AES-256. The processor must see plaintext. That requirement creates the exposure window.

| Encryption method | Data at rest | Data in transit | Data in use | Enterprise maturity |
|---|---|---|---|---|
| AES-256 | ✅ Strong | ❌ Not applicable | ❌ Requires decryption | Very high |
| TLS 1.3 | ❌ Not applicable | ✅ Strong | ❌ Requires decryption | Very high |
| FIPS 140-3 modules | ✅ Strong | ✅ Strong | ❌ Requires decryption | High |
| Hardware TEEs | ✅ Partial | ✅ Partial | ✅ Strong | Medium |
| Fully Homomorphic Encryption | ✅ Inherent | ✅ Inherent | ✅ Native | Emerging |
| Optical spatial encryption | ✅ Partial | ✅ Partial | 🔄 Experimental | Early stage |

Traditional encryption fails for data-in-use precisely because it must decrypt data before processing. Fully Homomorphic Encryption addresses this gap; its computational overhead remains high but is improving rapidly. Trusted Execution Environments (TEEs), which are hardware-isolated memory regions, offer a practical intermediate solution but provide less comprehensive coverage than FHE.

Optical spatial encryption represents an emerging physical-layer approach that protects data through the optical encoding of spatial patterns, particularly promising for high-throughput AI inference workloads but still in early enterprise adoption stages.

Pro Tip: Do not manage encryption keys separately from your broader enterprise AI risk frameworks. Integrate KMS rotation policies, key access logging, and anomaly detection into a unified governance layer. Fragmented key management is one of the most common sources of compliance gaps in AI deployments.
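As a rough illustration of folding rotation policy into a single governance check, the sketch below flags keys that are overdue for rotation from a list of key metadata records. The `KeyRecord` fields, the 90-day window, and the sample key IDs are all hypothetical; a real deployment would read this metadata from its KMS rather than hard-code it.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical rotation policy; use whatever window your own policy requires.
MAX_KEY_AGE = timedelta(days=90)


@dataclass(frozen=True)
class KeyRecord:
    """Illustrative key metadata, not the schema of any real KMS."""
    key_id: str
    last_rotated: datetime


def overdue_keys(keys, now, max_age=MAX_KEY_AGE):
    """Return the IDs of keys whose last rotation is older than max_age."""
    return [k.key_id for k in keys if now - k.last_rotated > max_age]


now = datetime(2026, 1, 1, tzinfo=timezone.utc)
inventory = [
    KeyRecord("training-data-key", now - timedelta(days=30)),
    KeyRecord("inference-cache-key", now - timedelta(days=200)),
]
print(overdue_keys(inventory, now))  # ['inference-cache-key']
```

The same loop is a natural place to attach access-log checks or anomaly alerts, so that key hygiene is reported alongside other AI risk signals rather than in a separate tool.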

Fully Homomorphic Encryption (FHE) is the most significant cryptographic breakthrough for AI security in the past decade. The concept is straightforward in principle, even if the mathematics are complex: FHE allows computation directly on encrypted data without ever decrypting it.

FHE enables AI inference on sensitive data without exposing it to the compute layer, making it vital for use cases involving healthcare records, financial data, and regulated personal information. A model can generate a prediction, classify a document, or summarize a report, all while the underlying data remains mathematically encrypted throughout.
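To make "computing on ciphertext" concrete, here is a toy sketch of the Paillier cryptosystem in pure Python. Paillier is only additively homomorphic, so it is a simpler relative of FHE rather than FHE itself. The fixed primes and function names are illustrative assumptions, and the parameters are far too small for real use. Production work would rely on a vetted FHE library.

```python
import math
import secrets

# Toy parameters: two Mersenne primes. Far too small for real security.
P = 2**31 - 1
Q = 2**61 - 1
N = P * Q
N_SQ = N * N
G = N + 1  # common simple choice of generator
LAM = (P - 1) * (Q - 1) // math.gcd(P - 1, Q - 1)  # lcm(P - 1, Q - 1)
MU = pow(LAM, -1, N)  # modular inverse; this shortcut relies on G = N + 1


def encrypt(m: int) -> int:
    """Encrypt an integer 0 <= m < N using fresh randomness."""
    while True:
        r = secrets.randbelow(N - 1) + 1
        if math.gcd(r, N) == 1:
            break
    return (pow(G, m, N_SQ) * pow(r, N, N_SQ)) % N_SQ


def decrypt(c: int) -> int:
    """Recover the plaintext using the private values LAM and MU."""
    l_value = (pow(c, LAM, N_SQ) - 1) // N
    return (l_value * MU) % N


def add_encrypted(c1: int, c2: int) -> int:
    """Multiplying two ciphertexts adds the underlying plaintexts."""
    return (c1 * c2) % N_SQ


def scale_encrypted(c: int, k: int) -> int:
    """Raising a ciphertext to the power k multiplies the plaintext by k."""
    return pow(c, k, N_SQ)


# The party doing the arithmetic never sees 52000 or 61000 in the clear.
total = add_encrypted(encrypt(52_000), encrypt(61_000))
print(decrypt(total))  # 113000
```

Addition and multiplication by a known constant are enough for linear scoring on encrypted features; full FHE schemes extend this to arbitrary computation, which is where the overhead discussed in this article comes from.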

The practical challenges have historically been significant, with computational overhead the main barrier to adoption.

The performance picture is changing fast. H33 has demonstrated 198 billion FHE operations per day on a single node, with breakthroughs yielding an 80x speedup in matrix operations critical for AI workloads. Those benchmarks represent a genuine inflection point. Enterprise-scale FHE is moving from theoretical to operational.
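For capacity planning, a daily figure is easier to reason about as a per-second rate. The conversion below uses only the 198 billion figure quoted above and assumes the load is spread evenly across the day, which the benchmark claim itself does not state.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400

ops_per_day = 198_000_000_000  # daily figure quoted in the text
ops_per_second = ops_per_day / SECONDS_PER_DAY

print(f"{ops_per_second:,.0f} operations per second")  # 2,291,667 operations per second
```

Roughly 2.3 million operations per second is a useful yardstick only once you know how many FHE operations a single inference request in your workload consumes.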

Comparative approaches for data-in-use protection:

| Approach | In-use protection | Performance impact | Enterprise readiness |
|---|---|---|---|
| Hardware TEEs (Intel SGX, AMD SEV) | Strong | Low to medium | Ready now |
| FHE standalone | Comprehensive | High (improving) | Near-term |
| FHE-TEE hybrid | Comprehensive | Medium | Emerging |
| Scheme switching (CKKS + BFV) | Strong | Medium | Experimental |
| Optical spatial encryption | Partial | Very low | Early research |

Spatial encryption advances at the optical layer are drawing increasing research attention, particularly for inference pipelines where data must traverse high-bandwidth interconnects between AI accelerators. These physical-layer approaches complement rather than replace cryptographic methods.

For most enterprise AI leaders evaluating their 2026 roadmap, TEEs offer the most practical immediate path to in-use protection. FHE-TEE hybrids will become the standard architecture as FHE tooling matures. Planning for that transition now, rather than retrofitting later, is the strategically sound move.

Knowing what encryption methods exist is necessary. Knowing how to select, combine, and operationalize them for GDPR, HIPAA, and SOC 2 compliance is what separates secure enterprises from vulnerable ones.

Enterprise IT leaders should prioritize FHE and TEE hybrids for compliance-grade AI deployments, integrating these with Zero Trust architecture and Data Security Posture Management (DSPM) per Gartner and Forrester guidance. That is not a theoretical recommendation. It is a practical framework that maps directly to regulatory requirements.

Here is a structured approach for implementation:

1. Audit your current encryption coverage. Map every AI data flow: training pipelines, inference APIs, model storage, and output logs. Identify where decryption occurs and for how long. This audit commonly reveals three to five unprotected exposure windows in an enterprise AI deployment.

2. Classify data by sensitivity before selecting encryption. Not every AI workload requires FHE. Segment your data: PII and regulated health or financial data warrant the strongest in-use protection. Less sensitive operational data may be adequately protected by TEEs alone.

3. Deploy TEEs immediately for sensitive inference workloads. Intel SGX, AMD SEV-SNP, and ARM TrustZone provide hardware-isolated execution environments available from major cloud providers today. This closes the most critical data-in-use gaps without waiting for FHE maturity.

4. Integrate DSPM tools into your AI platform governance layer. Data Security Posture Management continuously scans for misconfigurations, unencrypted data exposures, and policy violations across your AI infrastructure. Think of it as continuous compliance auditing rather than point-in-time snapshots.

5. Pair encryption with role-based access controls (RBAC) and least-privilege policies. Encryption protects data if an attacker gets access. RBAC reduces the probability of unauthorized access in the first place. Both are essential; neither is sufficient alone.

6. Implement cryptographic verification in RAG architectures. Retrieval-Augmented Generation (RAG) systems pull live enterprise data to inform AI responses. Encryption alone is insufficient in these environments. Runtime data exposure in RAG pipelines demands cryptographic verification at the retrieval layer, not just contractual data handling agreements with vendors.
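The classification step in the approach above can be sketched as a small policy lookup. The tag names, the two tiers, and the control labels below are hypothetical and simply mirror the guidance in the text; a real classifier would draw its tags from a data catalog or DSPM tool.

```python
from enum import Enum


class Sensitivity(Enum):
    REGULATED = "regulated"      # PII, health, or financial data
    OPERATIONAL = "operational"  # less sensitive business data


# Hypothetical policy table reflecting the guidance in the text.
IN_USE_CONTROL = {
    Sensitivity.REGULATED: "FHE or FHE-TEE hybrid",
    Sensitivity.OPERATIONAL: "TEE",
}

# Illustrative tags only.
REGULATED_TAGS = {"pii", "health", "financial"}


def classify(tags):
    """Treat a dataset as regulated if it carries any regulated tag."""
    if REGULATED_TAGS & set(tags):
        return Sensitivity.REGULATED
    return Sensitivity.OPERATIONAL


def required_control(tags):
    """Look up the in-use protection tier for a tagged dataset."""
    return IN_USE_CONTROL[classify(tags)]


print(required_control({"pii", "crm"}))   # FHE or FHE-TEE hybrid
print(required_control({"build-logs"}))   # TEE
```

Keeping the policy in one table makes it easy to review with compliance teams and to tighten later without touching pipeline code.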

Pro Tip: When deploying RAG systems inside a zero trust security architecture, add cryptographic attestation to every retrieval request. This means the AI can verify that the data source it is querying has not been tampered with before incorporating that data into a response. Standard monitoring catches anomalies after the fact. Cryptographic attestation is designed to stop contaminated data before it enters the response pipeline.
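One way to approximate this idea is to tag each document with a keyed hash at ingestion and drop any retrieved chunk whose tag no longer matches. The sketch below uses HMAC-SHA256 from the Python standard library as a stand-in for attestation. The function names, the in-memory store, and the hard-coded key are assumptions for illustration; a real system would fetch the key from a KMS and might use digital signatures or hardware attestation instead.

```python
import hashlib
import hmac

# Placeholder only; in practice the key would come from a KMS.
INGEST_KEY = b"demo-key-do-not-use"


def tag_document(content: str, key: bytes = INGEST_KEY) -> str:
    """Compute an integrity tag for a document at ingestion time."""
    return hmac.new(key, content.encode("utf-8"), hashlib.sha256).hexdigest()


def verified_chunks(retrieved, key: bytes = INGEST_KEY):
    """Keep only (content, tag) pairs whose tag still matches the content."""
    safe = []
    for content, tag in retrieved:
        expected = tag_document(content, key)
        if hmac.compare_digest(expected, tag):  # constant-time comparison
            safe.append(content)
    return safe


original = "Refund policy: 30 days."
store = [
    (original, tag_document(original)),
    ("Refund policy: 365 days.", tag_document(original)),  # altered after ingestion
]
print(verified_chunks(store))  # ['Refund policy: 30 days.']
```

Only the verified chunks would be passed into the prompt; rejected chunks are a signal worth logging and alerting on.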

For GDPR compliance specifically, encryption serves a dual purpose. It helps satisfy the technical security obligations under Article 32, and under Article 34(3)(a) it can exempt the controller from communicating a breach to affected data subjects when the exposed data was encrypted and the keys were not compromised. That exemption can mean the difference between a contained security incident and an obligation to notify every affected individual.

HIPAA’s addressable implementation specification for encryption similarly rewards organizations that can demonstrate end-to-end protection across the data lifecycle. Regulators increasingly expect this to include the AI processing layer, not just traditional storage and transmission.

Refer to AI compliance frameworks that address both technical controls and governance processes, because regulators evaluate both when assessing an organization’s security posture after an incident.

Here is the uncomfortable truth most enterprise AI security strategies miss: encryption is a protection mechanism, not a security strategy. Organizations that treat it as a checkbox, deploying AES-256 at rest and TLS 1.3 in transit and calling their AI platform secure, are building a false sense of protection.

The breach scenarios we see repeatedly are not encryption failures in the classical sense. They are architecture failures. The data was encrypted at rest. It was encrypted in transit. But the moment it was retrieved by the AI agent, loaded into the inference context, or pulled into a RAG pipeline, it was sitting in plaintext in memory, accessible to any process with sufficient privilege.

Most enterprises have not done a runtime exposure audit of their AI platforms. They have reviewed their storage configurations and their network policies. But they have not asked: “At what moment during AI processing is our most sensitive data completely unencrypted, and who or what has access to memory at that moment?”

That question should be standard operating procedure in every enterprise AI security review. It almost never is.
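A runtime exposure audit can start as a simple inventory of pipeline stages. The sketch below records, for each stage, whether data sits in plaintext in memory and which principals can read that memory, then lists the stages that answer the question above. The stage names and principals are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    plaintext_in_memory: bool
    memory_readers: tuple  # principals able to read process memory


def exposure_windows(stages):
    """Return (stage name, readers) for every stage that holds plaintext."""
    return [(s.name, s.memory_readers) for s in stages if s.plaintext_in_memory]


pipeline = [
    Stage("object storage", False, ()),
    Stage("retrieval service", True, ("retriever process", "host root")),
    Stage("inference context", True, ("model server", "host root")),
]

for name, readers in exposure_windows(pipeline):
    print(f"{name}: readable by {', '.join(readers)}")
```

Even a list this small makes the review concrete: each flagged stage needs either an in-use control such as a TEE or a documented justification for the exposure.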

The second overlooked dimension is cryptographic verification versus contractual trust. Many organizations assume that because their AI vendor signed a data processing agreement, the data is protected. Contracts govern liability. They do not protect data. Only cryptographic controls protect data. Particularly in generative AI and RAG architectures, where the system is dynamically retrieving and incorporating live enterprise data, cryptographic verification of data integrity and source authenticity is the only reliable protection.

Adaptive, hybrid security models consistently outperform static control sets. An architecture combining TEEs for immediate in-use protection, FHE for the most sensitive inference workloads, DSPM for continuous posture monitoring, and zero trust best practices for access governance creates defense in depth that no single control can achieve alone. Static encryption policies set once and reviewed annually will not keep pace with the threat landscape or the speed of AI platform evolution.

The organizations that will lead on AI security over the next three years are not the ones investing the most in any single encryption technology. They are the ones building adaptive, layered architectures that treat encryption as one control among many, combined with runtime verification, continuous monitoring, and behavioral anomaly detection across every layer of their AI stack.

Encryption strategy is only as strong as the platform that enforces it. Hymalaia is built from the ground up for enterprise environments where data protection, compliance, and operational scale are non-negotiable. Every AI agent deployed through Hymalaia operates within a governance framework that supports GDPR compliance, role-based access controls, and flexible deployment across cloud, on-premise, and hybrid environments.

Whether your organization is connecting Salesforce, SharePoint, Slack, or Google Workspace, Hymalaia ensures data flows securely through retrieval-augmented generation pipelines with the controls enterprise security teams require. Explore the full range of AI platform features designed to support advanced data protection, compliance automation, and secure enterprise AI deployment at scale. If your team is ready to move from encryption strategy to operational security, Hymalaia is the platform built to get you there. Book a demo and see how secure, scalable AI works in practice.

Encryption keeps data unreadable during storage, transit, and processing, ensuring that even when an AI system misbehaves or hallucinates, exposed data is cryptographically protected. A real example: poor guardrails and unprotected data led to 1,247 PII records being leaked and $412,000 in fines.

FHE allows AI systems to compute directly on encrypted data without ever decrypting it, eliminating the primary in-use exposure window. This makes it vital for secure AI inference on regulated data like healthcare records and financial transactions.

AES-256 for data at rest, TLS 1.3 for transit, and FIPS 140-3 validated modules form the baseline; key management through AWS KMS or Azure Key Vault adds centralized, auditable control over cryptographic keys.

Advanced methods like FHE do add computational overhead, but 198 billion FHE operations per day on a single node, combined with 80x matrix operation speedups, show that enterprise-grade FHE performance is within reach for most workloads.